Camera Sensor Technology Explained – Stacked, Partially Stacked, BSI, IBIS, and What It All Means

Paulius Bagdonas

Camera sensor technology is one of those topics that sounds complicated but really comes down to a few core ideas. Most photographers interact with the results of sensor design every day without thinking about how the sensor actually works. Shutter speed, burst rate, rolling shutter, autofocus performance, and overheating are all directly tied to what is physically happening on the imaging sensor.

In this article I will go through the main sensor technologies used in modern cameras, explain what they do in plain language, and then move into the real-world implications that actually matter for photography, video, and photogrammetry work. I will also cover IBIS, which is not an on-sensor technology in the traditional sense but is closely related and increasingly important.

How a Digital Camera Sensor Works

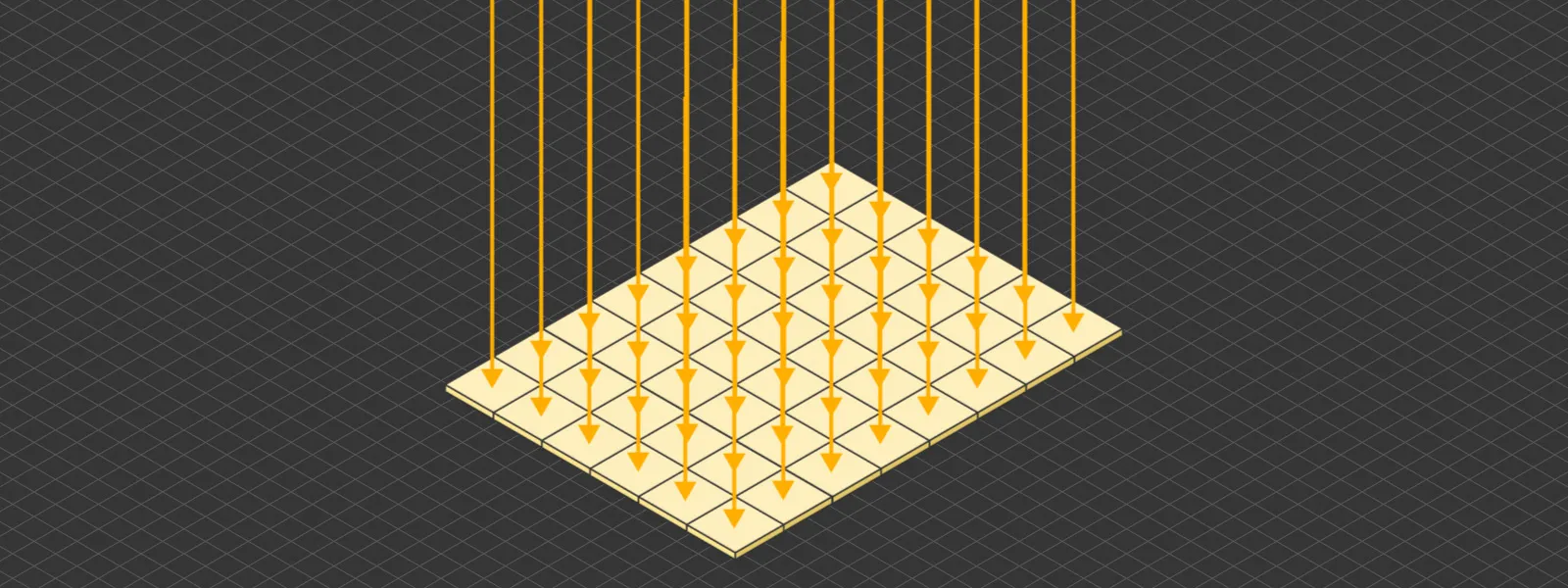

At the most basic level, a camera sensor is a device that converts light into electrical signals. Light enters the lens and hits millions of tiny photosites on the sensor surface. Each photosite generates a small electrical charge proportional to the amount of light it receives. That charge is then read out, converted into a digital value, and assembled into an image.

Think of it like a field of buckets collecting rainwater. Each bucket collects a different amount of water depending on where the rain falls. After a set period, you go around and measure how much is in each bucket. The collection of all those measurements gives you a map of where it rained and how much. The sensor does the same thing, but with photons instead of raindrops, across millions of photosites at once, and it can repeat the process many times per second.
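
As a rough illustration of the bucket analogy, the sketch below models a single photosite in Python. The function name `read_photosite` and the values for quantum efficiency, full-well capacity, and bit depth are assumptions chosen for illustration, not figures from any particular sensor.

```python
def read_photosite(photons, quantum_efficiency=0.6, full_well=50_000, bit_depth=14):
    """Turn a photon count into a digital value for one photosite (toy model)."""
    # Only a fraction of the incoming photons is converted into electrons.
    electrons = photons * quantum_efficiency
    # The "bucket" can only hold so much charge; anything beyond that is lost.
    electrons = min(electrons, full_well)
    # The analog-to-digital converter maps the charge onto integer codes.
    max_code = 2 ** bit_depth - 1
    return round(electrons / full_well * max_code)


# Four photosites receiving increasing amounts of light (hypothetical counts).
row = [read_photosite(p) for p in (0, 1_000, 40_000, 200_000)]
print(row)
```

The last value clips at the maximum code, which is the sensor equivalent of an overflowing bucket: a blown highlight.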

This fundamental process has not changed since the earliest digital sensors. What has changed dramatically is how fast and efficiently the sensor can read out all of that data.

CCD vs CMOS – A Brief History

Early digital cameras used CCD (Charge-Coupled Device) sensors. CCD sensors read out data sequentially, shifting charges row by row to a single output. This produced clean, uniform images but was slow and power-hungry.

CMOS (Complementary Metal-Oxide-Semiconductor) sensors changed the game by allowing each photosite to have its own readout circuitry. This made readout faster and more power-efficient, which is why virtually every modern camera uses CMOS technology. The tradeoff in early CMOS sensors was more noise and less uniformity, but those issues have largely been resolved over the past two decades.

Today, the conversation is no longer about CCD versus CMOS. It is about what kind of CMOS architecture the sensor uses, which is where BSI, stacked, and partially stacked designs come in.

BSI – Back-Side Illuminated Sensors

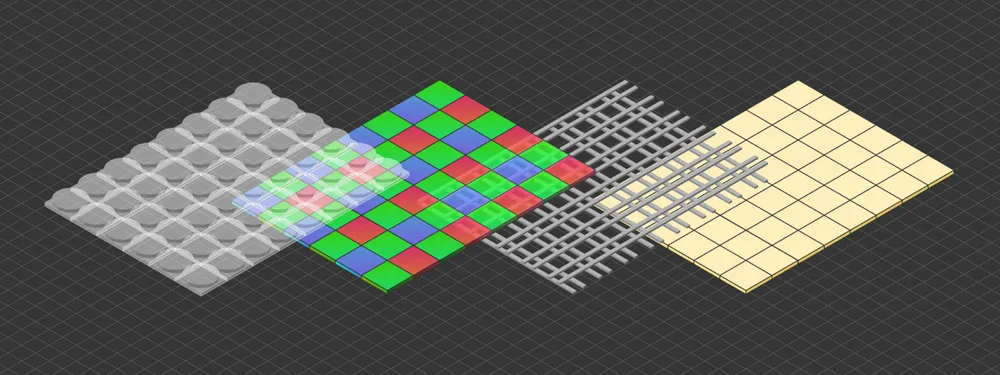

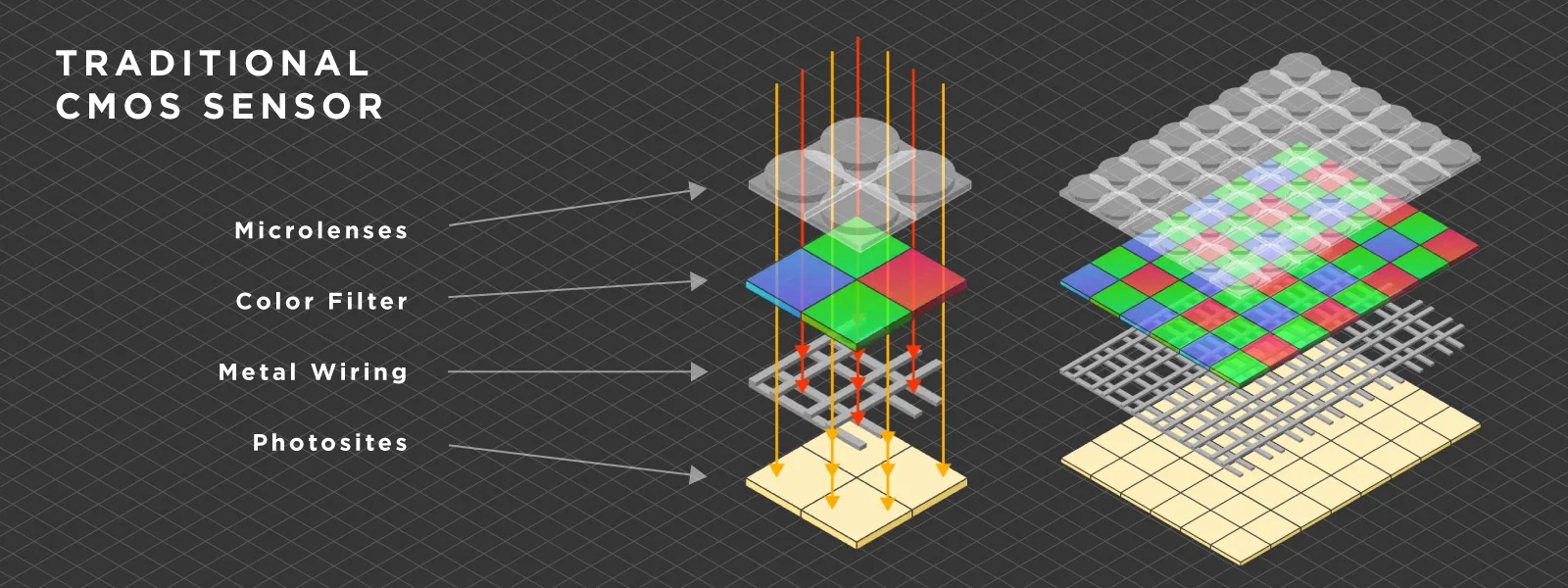

In a traditional CMOS sensor, the wiring layer sits on top of the photosites. Light has to pass through that wiring before reaching the light-sensitive area. This is not ideal because some light gets blocked or scattered.

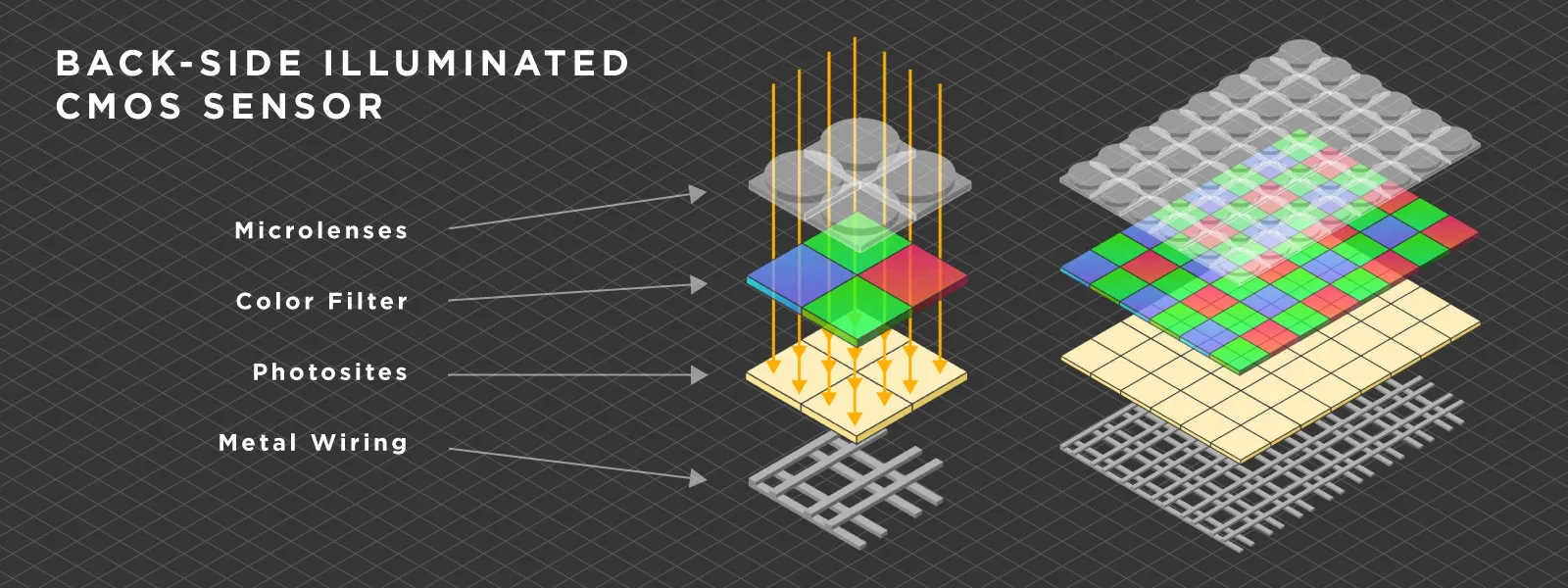

BSI (Back-Side Illuminated) sensors flip the design. The wiring is placed behind the photosite layer, so light hits the photosensitive area directly. The result is better light gathering efficiency, improved low-light performance, and cleaner images overall.

BSI has become the standard for modern camera sensors. Most current full frame cameras, including the Sony A7 IV, use BSI CMOS sensors. It is no longer a premium feature but a baseline expectation.

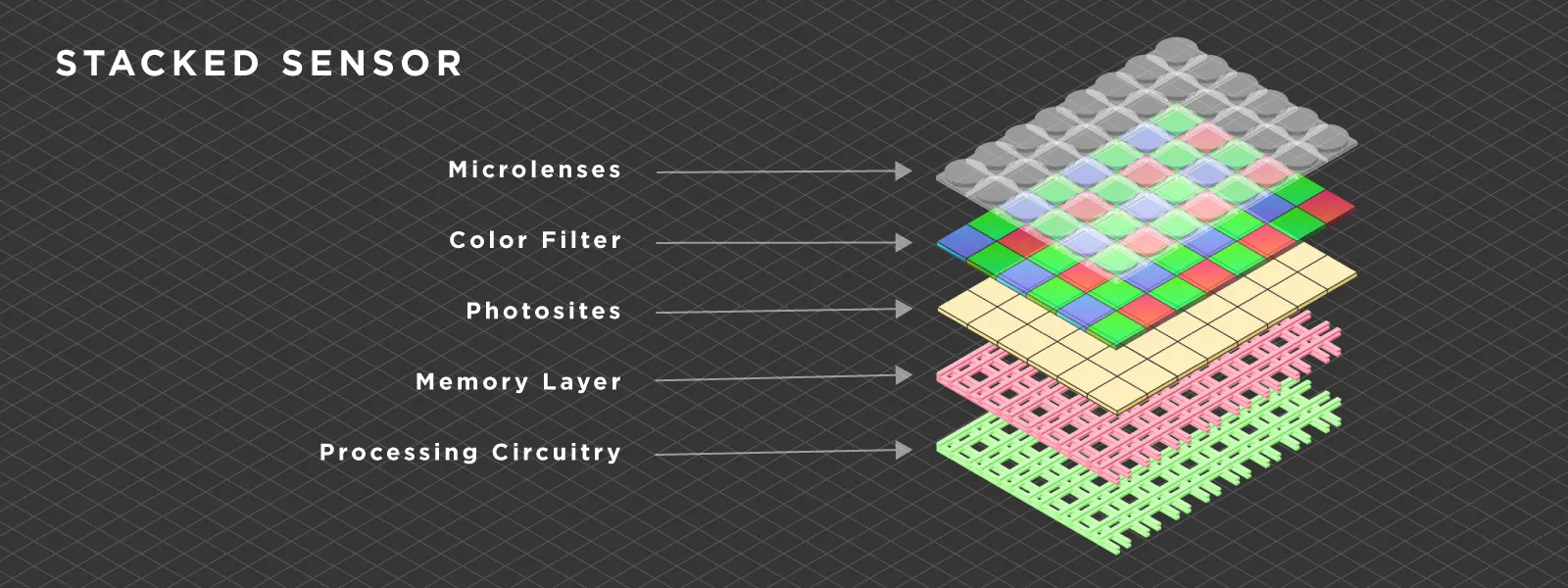

Stacked Sensors – Speed Through Architecture

A stacked sensor takes the BSI concept further by adding a dedicated layer of memory (typically DRAM) directly beneath the imaging layer. This creates a physical "stack" of components: the photosite layer on top, then the memory layer, then the processing circuitry below.

The key benefit is readout speed. With built-in memory, the sensor can dump its entire frame into the DRAM layer almost instantly, and then the processing circuitry reads it out from there at its own pace. Without this memory layer, the sensor has to read out row by row while the next frame is already being exposed, which is slower.

Fully stacked sensors are found in high-end cameras such as the Sony A1, Sony A9 III, Nikon Z8, and Nikon Z9. These cameras can read out the entire sensor extremely fast, which enables features such as blackout-free shooting, very high burst rates, and in the case of the A9 III, a global shutter that eliminates rolling shutter distortion entirely.

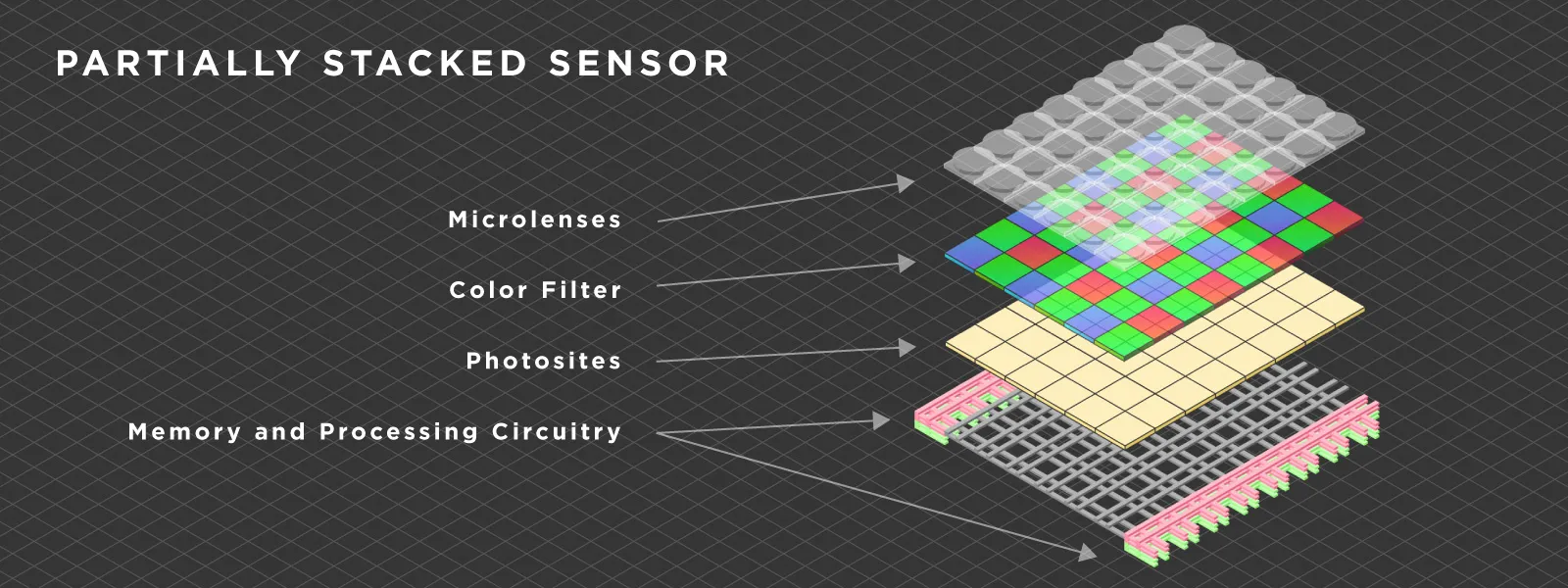

Partially Stacked Sensors – The Middle Ground

A partially stacked sensor is a CMOS imaging sensor that integrates some high-speed memory into the sensor stack, but not a full DRAM layer like a fully stacked design. This is a more recent development and represents an important shift in the market.

The result is a sensor that is significantly faster than a standard BSI design, but not quite as fast as a fully stacked one. It sits in between, offering a meaningful speed improvement at a lower manufacturing cost.

The Sony A7 V is the most prominent example. Its partially stacked sensor provides a readout time of approximately 15 milliseconds in electronic shutter mode. That is fast enough to make the electronic shutter genuinely usable for most shooting scenarios, something that was not the case with the standard BSI sensor in the A7 IV. Canon and Nikon are implementing similar approaches in their respective mid-range bodies.

Partially stacked sensors are significant because they bring high-speed sensor performance to a much wider audience. Not everyone needs or can afford a flagship camera. But the ability to shoot at 30 frames per second with autofocus, use electronic shutter without severe rolling shutter artifacts, and capture high frame rate video are features that wildlife photographers, sports shooters, and hybrid creators genuinely benefit from.

Real-World Implications of Sensor Readout Speed

Rolling Shutter and Electronic Shutter

Rolling shutter is one of the most visible consequences of slow sensor readout. Because a CMOS sensor reads out line by line from top to bottom, fast-moving subjects can appear skewed or distorted. The faster the readout, the less pronounced this effect becomes.
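
To get a feel for the numbers, skew can be approximated as subject speed multiplied by readout time. The sketch below is a simplified model that assumes a subject moving horizontally at constant speed. The function name and the 60 ms and 4 ms readout times are hypothetical, while 15 ms is the approximate figure quoted earlier for the A7 V.

```python
def rolling_shutter_skew_px(subject_speed_px_per_s, readout_time_s):
    """Horizontal offset, in pixels, between the top and bottom rows of the frame."""
    return subject_speed_px_per_s * readout_time_s


# A subject crossing a 6000 px wide frame in half a second (hypothetical).
speed = 6000 / 0.5

for label, readout_s in (("slow", 0.060), ("mid", 0.015), ("fast", 0.004)):
    skew = rolling_shutter_skew_px(speed, readout_s)
    print(f"{label} readout ({readout_s * 1000:.0f} ms): about {skew:.0f} px of skew")
```

Skew scales linearly with readout time in this model, so halving the readout time halves the lean of a moving subject.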

With fully stacked sensors, rolling shutter is reduced to the point where it is rarely visible. The Nikon Z8 and Z9, for example, can shoot entirely in electronic shutter mode with minimal distortion. The Sony A9 III goes further with a global shutter that exposes and captures all lines at the same time, removing rolling shutter completely.

Partially stacked sensors like the one in the A7 V do not eliminate rolling shutter entirely, but they reduce it to a level where electronic shutter becomes a practical default for most situations. This is a meaningful shift. It means photographers can preserve their mechanical shutter for longevity while using electronic shutter for silent shooting and higher burst rates.

Burst Rates and Pre-Capture

Faster readout directly enables higher burst rates. The Sony A7 V can shoot at 30 frames per second in electronic shutter mode with full autofocus. The Sony A1 II reaches 30 fps as well, and its fully stacked sensor reads out faster still.
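
The link between readout time and burst rate can be expressed as a simple upper bound: a sensor cannot deliver frames faster than it can read them out. The sketch below assumes readout is the only limit, which ignores exposure time, autofocus, processing, and buffer speed. The function name is hypothetical.

```python
def max_frame_rate(readout_time_s):
    """Upper bound on frames per second if readout were the only bottleneck."""
    return 1.0 / readout_time_s


# Roughly 15 ms is the readout time quoted earlier for the A7 V.
ceiling = max_frame_rate(0.015)
print(f"Readout-limited ceiling: {ceiling:.1f} fps")
```

With a readout of about 15 ms the ceiling sits well above 30 fps, which is consistent with that burst rate being achievable.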

Pre-capture is another feature that depends entirely on fast sensor readout. The camera continuously buffers frames before you fully press the shutter, so the moment is captured even if your reaction is slightly late. This is invaluable for wildlife and sports photography.
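
Conceptually, pre-capture behaves like a ring buffer that always holds the most recent frames. The sketch below is a minimal illustration of that idea, not how any camera firmware actually implements it. The class name `PreCaptureBuffer` and the 30 fps and 0.5 second values are assumptions.

```python
from collections import deque


class PreCaptureBuffer:
    """Keeps only the most recent frames while the shutter is half-pressed."""

    def __init__(self, fps, seconds):
        # A deque with maxlen silently discards the oldest frame when full.
        self.frames = deque(maxlen=int(fps * seconds))

    def on_frame(self, frame):
        self.frames.append(frame)

    def on_full_press(self):
        # Everything still in the buffer is written out with the burst.
        return list(self.frames)


buffer = PreCaptureBuffer(fps=30, seconds=0.5)
for frame_number in range(100):          # frames arriving during half-press
    buffer.on_frame(frame_number)

saved = buffer.on_full_press()
print(saved[0], saved[-1], len(saved))   # first and last buffered frame, count
```

The faster the sensor reads out, the more frames per second can flow through a buffer like this.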

Mechanical Shutter Elimination

Nikon made a bold decision with the Z8 and Z9 by removing the mechanical shutter entirely. This was only possible because their fully stacked sensor reads out fast enough to make electronic shutter the sole capture method without compromise. Fewer mechanical parts means less wear, less vibration, and one less component that can fail.

For photogrammetry users, this is a relevant development as well. Mechanical shutter wear from taking thousands of photos per scan has always been a background concern, and rolling shutter distortion from electronic shutter could affect 3D reconstruction quality. With faster readout speeds, both of these issues are greatly reduced. The recent generation of partially stacked sensors specifically marks a turning point here, because even mid-range cameras now have readout speeds fast enough for reliable electronic shutter use during scanning.

Overheating and Video Performance

Sensor readout speed and architecture also affect thermal management. Faster readout means less time spent actively reading data per frame, which can reduce heat generation during continuous shooting. More importantly, the way processing is distributed across the sensor stack affects how heat builds up during extended video recording.

The Sony A7 V, for example, can record 4K 60p video without overheating, something its predecessor struggled with. This is partly due to the partially stacked sensor design distributing processing load more efficiently.

Autofocus, Video Oversampling, and Other On-Sensor Processing

It is worth noting that modern sensors do far more than just capture light. Phase-detection autofocus points are embedded directly on the sensor surface. The quality and density of these points, combined with how quickly the sensor can read and process their data, determines autofocus performance.

Video oversampling also depends on sensor readout. When a camera reads out more pixels than the final video resolution and then downscales, it produces sharper and cleaner footage. This requires the sensor to read massive amounts of data very quickly, which is why cameras with faster sensors tend to produce better video quality.
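
The downscaling step can be illustrated with the simplest possible filter: averaging each 2x2 block of pixels into one. This is a sketch under that assumption only. Real cameras use more sophisticated resampling, and the function name and sample values are hypothetical.

```python
def downscale_2x(image):
    """Average each 2x2 block of a grayscale image given as a list of rows."""
    height, width = len(image), len(image[0])
    return [
        [
            (image[y][x] + image[y][x + 1] + image[y + 1][x] + image[y + 1][x + 1]) / 4
            for x in range(0, width - 1, 2)
        ]
        for y in range(0, height - 1, 2)
    ]


# A tiny 4x4 "oversampled" frame with slightly noisy values.
frame = [
    [10, 12, 100, 104],
    [14, 16, 108, 112],
    [50, 50, 0, 4],
    [50, 50, 8, 4],
]
print(downscale_2x(frame))
```

Each output pixel is built from four input pixels, so random noise partly averages out. The sensor still has to read all sixteen input values to produce four output values, which is why oversampling depends on readout speed.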

In short, nearly every performance metric of a modern camera is tied to what happens on the sensor itself. Speed, autofocus, video quality, heat management, and dynamic range are all functions of the sensor's physical architecture and the signal processing built into it.

Stacked Sensors in Drones – Does It Matter for Aerial Photogrammetry?

Drones operate differently from handheld cameras. They typically fly at consistent speeds during mapping and scanning, which means extreme readout speeds are less critical for avoiding rolling shutter in aerial photogrammetry. Most modern drone sensors handle this well enough already.
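
A rough way to check how much readout time matters for a given flight plan is to compare the distance the drone travels during readout with the ground sampling distance (GSD). The function names and the example values for speed, readout time, and GSD below are assumptions for illustration only.

```python
def ground_shift_m(flight_speed_m_s, readout_time_s):
    """Distance the drone travels while one frame is being read out."""
    return flight_speed_m_s * readout_time_s


def shift_in_pixels(flight_speed_m_s, readout_time_s, gsd_m):
    """The same shift expressed in image pixels at a given GSD."""
    return ground_shift_m(flight_speed_m_s, readout_time_s) / gsd_m


# Hypothetical mapping flight: 5 m/s, 20 ms readout, 2 cm GSD.
shift = shift_in_pixels(5.0, 0.020, 0.02)
print(f"Top-to-bottom shift: about {shift:.1f} px")
```

Because the speed is constant during a mapping flight, the shift in this model is the same from frame to frame, and slowing the flight reduces it proportionally.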

That said, faster sensor technology could still benefit drones in other ways, such as better autofocus for tracking shots, improved video quality through oversampling, and reduced heat generation during long flights. Whether fully stacked or partially stacked sensors will make their way into consumer drones remains to be seen, but the general direction of sensor development suggests it is only a matter of time.

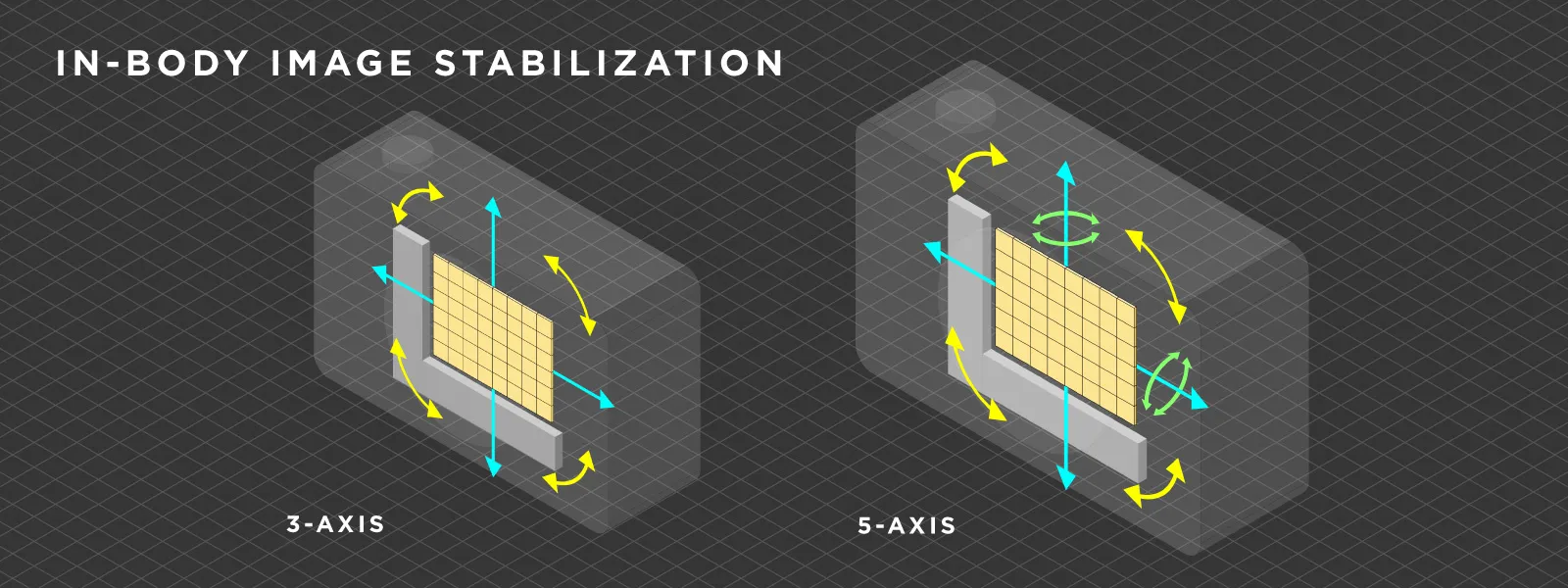

IBIS – In-Body Image Stabilization Explained

IBIS (In-Body Image Stabilization) is a system where the camera sensor itself is mounted on a mechanism that can move in multiple axes. When the camera detects motion from hand tremor or vibration, it shifts the sensor in the opposite direction to compensate, keeping the image stable.

Most modern IBIS systems stabilize across five axes: pitch, yaw, roll, and horizontal and vertical shift. The sensor is suspended on a platform that is moved by electromagnets in response to readings from gyroscopes, allowing it to make rapid micro-adjustments in real time.

The practical benefit is significant. IBIS turns any mounted lens into a stabilized lens. This is especially valuable for vintage lenses, manual lenses, or any optic that does not have built-in optical stabilization. For video shooters, IBIS provides a baseline level of smoothness that is always present regardless of the lens being used.

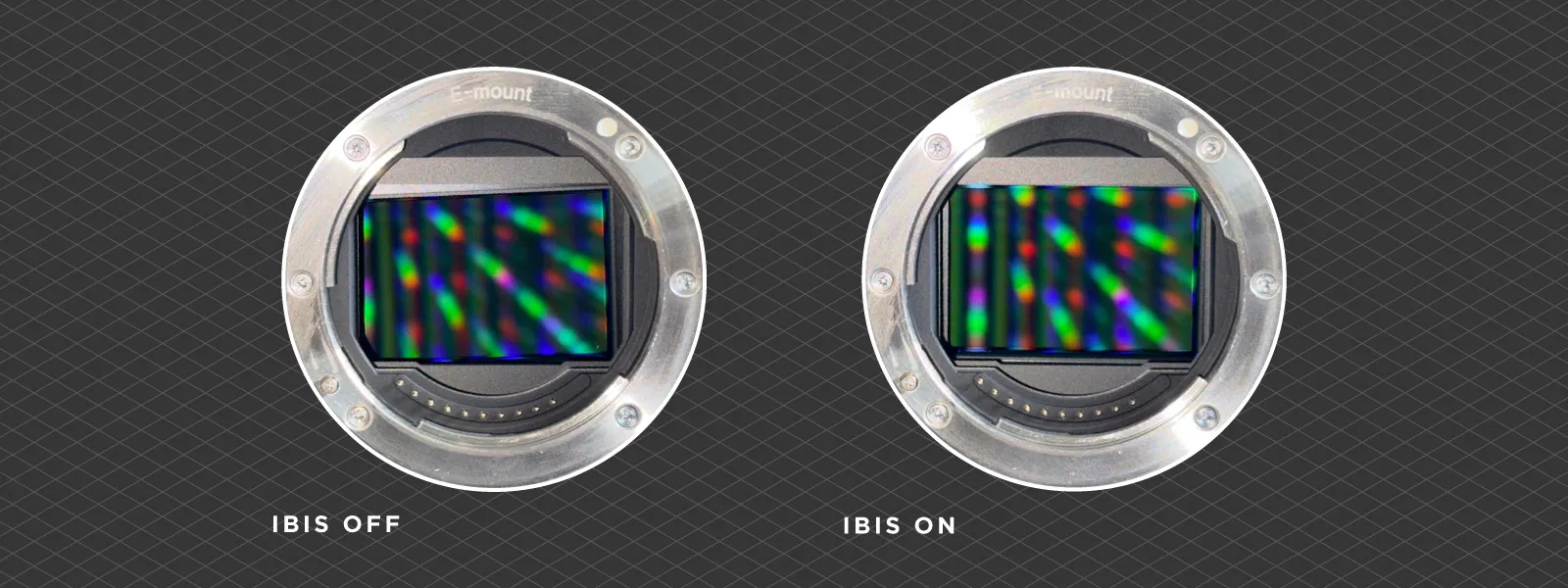

Why Is My Camera Sensor Tilted or Crooked? IBIS Sensors at Rest Explained

If you remove the lens from an IBIS-equipped camera and look at the sensor, you will notice it is not perfectly centered or level. It may appear tilted or shifted to one side. This is completely normal.

When the camera is powered off, the IBIS mechanism is not actively holding the sensor in position. The sensor simply rests wherever gravity and the suspension mechanism leave it. As soon as the camera powers on, the IBIS system centers and levels the sensor precisely.

This is one of the most common concerns among new camera owners. People see the floating sensor and assume something is broken. It is not. Every IBIS-equipped camera behaves this way. The sensor is designed to move freely, and it only locks into the correct position when the stabilization system is active. There is no defect, and it does not affect image quality in any way.

Pixel Shift Multi-Shot

IBIS enables another useful feature: pixel shift multi-shot. Because the sensor can move with extreme precision, the camera can take multiple exposures while shifting the sensor by one pixel or a fraction of a pixel between each shot. The resulting images are then combined to produce a single photograph with significantly higher resolution and color accuracy than a single frame.

This is made possible entirely by the IBIS mechanism. Without a sensor that can physically move in controlled increments, pixel shift would not be feasible.
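
The color accuracy part of pixel shift can be sketched with a toy model. Assuming an RGGB color filter pattern and four exposures offset by one whole pixel each, every point in the scene ends up being measured through a red, a blue, and two green filters, so no color has to be interpolated. The names below are hypothetical, and finer shifts used for extra resolution are not modelled here.

```python
BAYER = (("R", "G"), ("G", "B"))            # assumed RGGB filter layout
OFFSETS = ((0, 0), (0, 1), (1, 0), (1, 1))  # one-pixel sensor shifts
CHANNEL = {"R": 0, "G": 1, "B": 2}


def capture(scene, dy, dx):
    """One exposure: each scene point is seen through a single color filter."""
    shot = []
    for y, row in enumerate(scene):
        out_row = []
        for x, rgb in enumerate(row):
            color = BAYER[(y + dy) % 2][(x + dx) % 2]
            out_row.append((color, rgb[CHANNEL[color]]))
        shot.append(out_row)
    return shot


def combine(shots):
    """Merge the exposures into full RGB values, averaging repeated samples."""
    result = []
    for y in range(len(shots[0])):
        row = []
        for x in range(len(shots[0][0])):
            samples = {"R": [], "G": [], "B": []}
            for shot in shots:
                color, value = shot[y][x]
                samples[color].append(value)
            row.append(tuple(sum(samples[c]) / len(samples[c]) for c in "RGB"))
        result.append(row)
    return result


scene = [[(10, 20, 30), (40, 50, 60)], [(70, 80, 90), (100, 110, 120)]]
shots = [capture(scene, dy, dx) for dy, dx in OFFSETS]
print(combine(shots) == scene)
```

A single exposure in this model records only one of the three color values per point. Only the combination of all four shifted exposures recovers the full scene.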

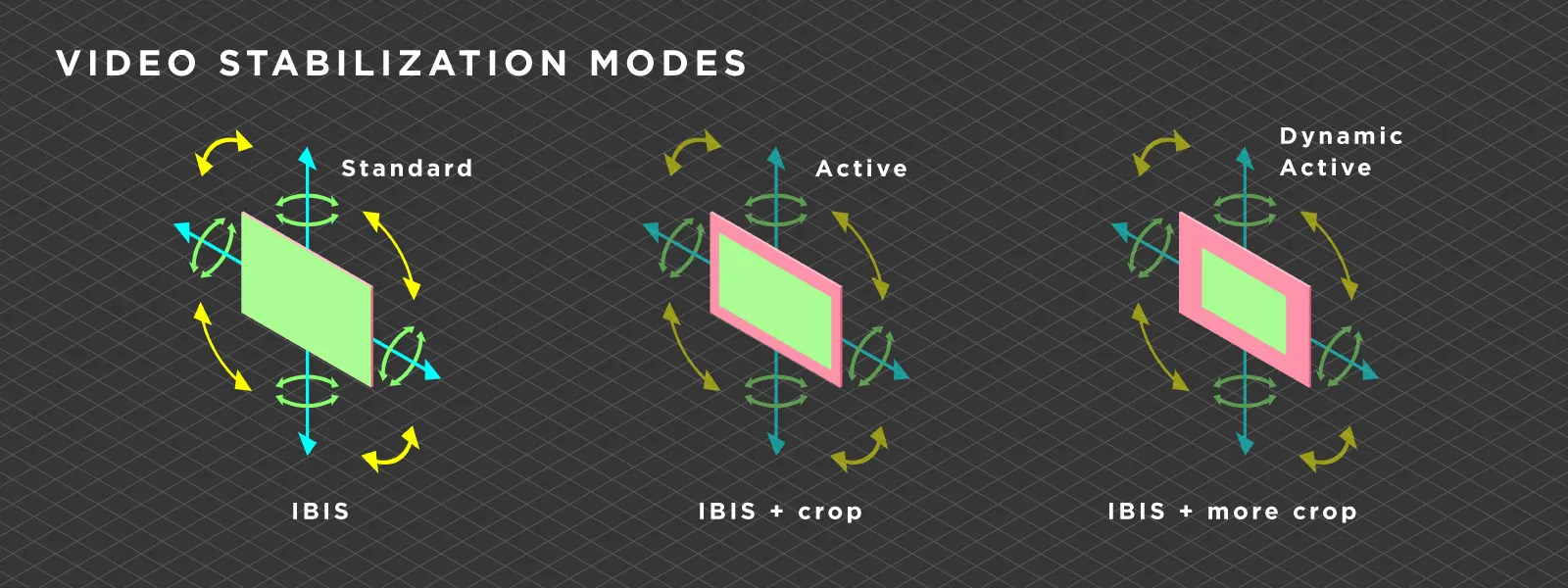

Video Stabilization Modes – Standard, Active, and Dynamic Active

Most cameras with IBIS offer multiple stabilization modes for video:

Standard mode uses only the physical sensor movement. It provides stabilization without any crop to the image.

Active mode combines IBIS with a slight crop of the footage. The additional margin around the frame allows the camera to apply computational stabilization on top of the physical correction. The result is noticeably smoother footage, but at the cost of a narrower field of view.

Dynamic active mode increases the crop further and applies more aggressive computational correction. This produces the smoothest results but reduces the effective resolution and field of view the most.

The tradeoff is always between stabilization amount and usable frame area.
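
The cost of each mode can be estimated from its crop factor. The crop factors below are hypothetical placeholders, since actual values vary by camera and mode, and the function names are my own.

```python
def remaining_area(crop_factor):
    """Fraction of the original frame area left after a stabilization crop."""
    return 1.0 / crop_factor ** 2


def equivalent_focal_length(focal_length_mm, crop_factor):
    """Focal length that would give the same field of view without the crop."""
    return focal_length_mm * crop_factor


# Hypothetical crop factors for illustration only.
MODES = {"standard": 1.0, "active": 1.1, "dynamic active": 1.4}

for mode, crop in MODES.items():
    print(
        f"{mode}: {remaining_area(crop):.0%} of the frame area, "
        f"24 mm behaves like {equivalent_focal_length(24, crop):.1f} mm"
    )
```

Frame area falls with the square of the crop factor, so even a modest crop gives up a noticeable share of the sensor.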

Gyro Metadata Stabilization – The Post-Production Option

Some cameras write gyroscope data as metadata into the video file. This records the exact motion of the camera during each frame. In post-production, software such as Gyroflow can read this gyro data and apply extremely precise stabilization.

The advantage of gyro-based stabilization is control. You decide exactly how much correction to apply, and the results can be excellent, often smoother than in-camera active stabilization modes. Because the stabilization is applied in editing, you also retain the full sensor readout during capture.

The downside is that post-production stabilization still requires cropping the frame, similar to active mode. Whether the resulting effective resolution is better or worse than using in-camera crop stabilization depends on the specific implementation and the amount of correction needed.
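
The general idea behind gyro-based stabilization can be sketched on a single axis: integrate the recorded rotation rates into a camera angle, smooth that path, and rotate each frame by the difference. This is a deliberately simplified illustration and not the algorithm used by Gyroflow or any other tool. All names and sample values are assumptions.

```python
def integrate(rates_deg_s, dt_s):
    """Turn angular rates from the gyro into a camera angle per frame."""
    angles, angle = [], 0.0
    for rate in rates_deg_s:
        angle += rate * dt_s
        angles.append(angle)
    return angles


def smooth(angles, radius):
    """Moving average; a larger radius gives a steadier virtual camera path."""
    smoothed = []
    for i in range(len(angles)):
        window = angles[max(0, i - radius): i + radius + 1]
        smoothed.append(sum(window) / len(window))
    return smoothed


def corrections(angles, radius):
    """Rotation to apply to each frame so it follows the smoothed path."""
    return [s - a for a, s in zip(angles, smooth(angles, radius))]


rates = [0.0, 40.0, -35.0, 30.0, -40.0, 5.0]   # hypothetical gyro samples, deg/s
angles = integrate(rates, dt_s=1 / 60)
fix = corrections(angles, radius=2)
print(f"Largest correction: {max(abs(c) for c in fix):.3f} degrees")
```

The `radius` parameter stands in for the control you get in post: more smoothing means larger corrections, and the largest correction determines how much of the frame has to be cropped.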

In my opinion, gyro metadata stabilization is the superior approach for those who are willing to invest the time in post. It provides more precision and more control than any in-camera crop mode. But for quick turnaround work, in-camera active stabilization is perfectly fine.

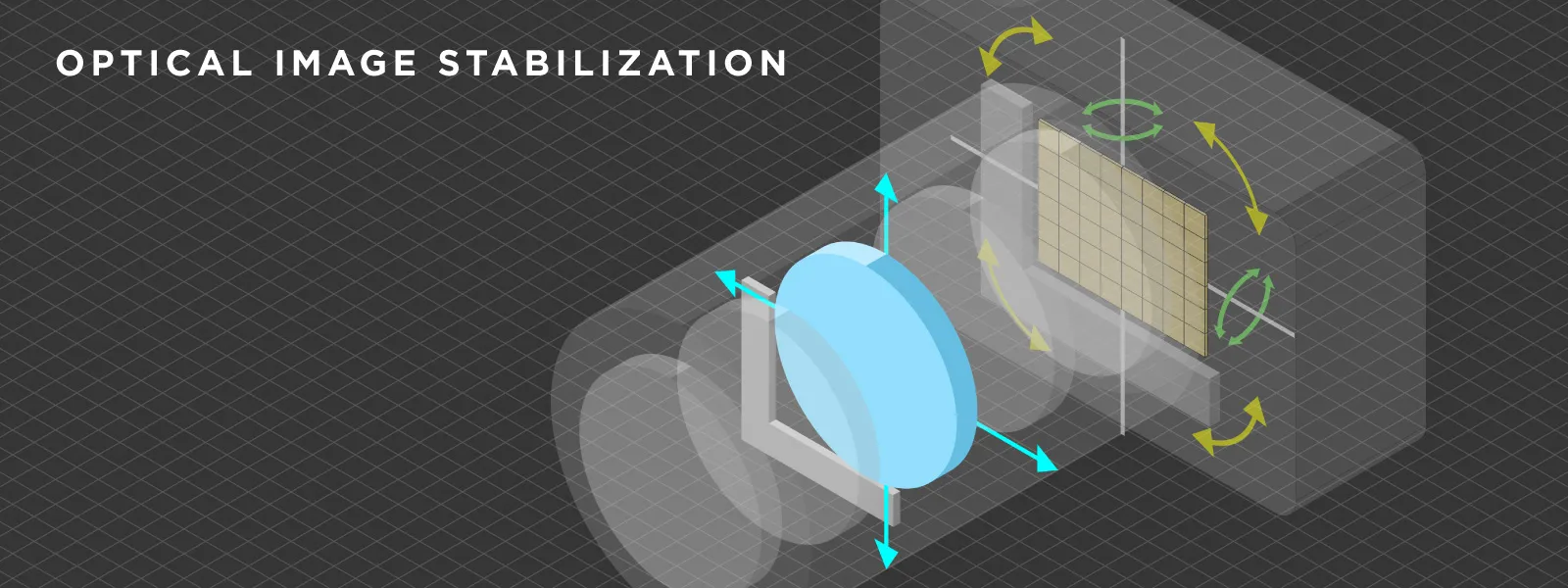

Lens-Based Optical Stabilization – Still Essential for Telephoto

IBIS is excellent for general stabilization, but it has physical limits. The sensor can only move so far on its platform, which means that at very long focal lengths, IBIS alone may not be sufficient to counteract the amplified shake.
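
The effect of focal length can be estimated with basic geometry: for a distant subject, an angular shake of a given size moves the image on the sensor by roughly the focal length multiplied by the tangent of that angle. The 0.5 degree shake and the function name below are assumptions for illustration.

```python
import math


def image_shift_mm(focal_length_mm, shake_deg):
    """Approximate image displacement on the sensor for a small angular shake."""
    return focal_length_mm * math.tan(math.radians(shake_deg))


# Hypothetical 0.5 degree shake at a wide and a long focal length.
for focal_length in (24, 600):
    shift = image_shift_mm(focal_length, 0.5)
    print(f"{focal_length} mm lens: about {shift:.2f} mm of image movement")
```

The same shake produces 25 times more image movement at 600 mm than at 24 mm, and the sensor would have to travel that much further to cancel it.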

This is where lens-based optical image stabilization remains essential. A stabilized telephoto lens shifts optical elements inside the lens barrel to correct for movement. Because the correction happens in the optical path rather than at the sensor, it is not limited by how far the sensor can travel and is more effective at long focal lengths than IBIS alone.

The best results come from using both systems together. Modern cameras and lenses communicate stabilization data in real time, allowing IBIS and lens-based OIS to work in tandem without conflicting. Each system handles the axes it is best suited for. Combined with gyro metadata for post-production refinement, this creates a stabilization chain that covers nearly every scenario.

The only stabilization method that consistently outperforms all of the above is a mechanical gimbal. Gimbals provide the smoothest possible footage by physically isolating the camera from the motion of whatever is carrying it. Drones use gimbal stabilization almost exclusively, which is why they generally do not need IBIS or computational stabilization.

Conclusion

Camera sensor technology has reached an interesting inflection point. The gap between flagship and mid-range performance is narrowing quickly, partially stacked sensors are making serious speed accessible to more people, and IBIS has matured into something that genuinely changes how we shoot. None of these technologies work in isolation. They build on each other, and the camera you hold today is the sum of decades of incremental improvements that are finally converging into something that feels complete. At least until the next generation reminds us it was not.
